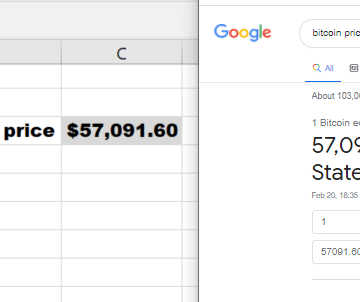

Recently the price of Bitcoin has skyrocketed again, and many people keep refreshing their phones and browsers to stay on top of the latest price fluctuations. Why not use Excel instead, and how do you get the Bitcoin price into Excel using a formula? There is no ready-made formula in Excel, but it turns out […]

Web

Excel WEBSERVICE and FILTERXML functions explained

The Excel WEBSERVICE and FILTERXML worksheet functions can be used to pull Internet data from a URL into a cell in an Excel spreadsheet. The first returns the raw response from the URL as text, while the second extracts values from XML content using an XPath expression. Until now, Excel has been mostly an offline application. Although you can use VBA, […]

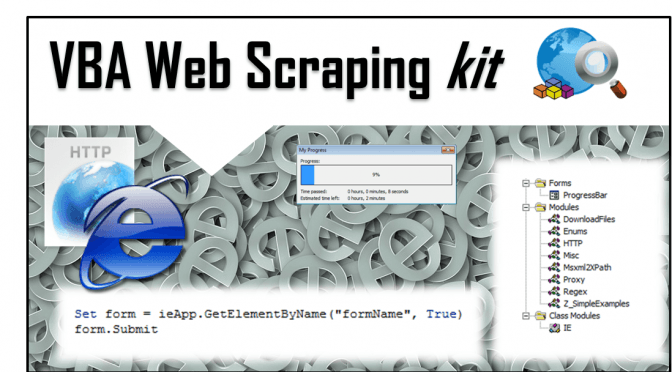

Web Scraping Kit – use Excel to get that Web data

I am proud to present the next Kit coming from AnalystCave.com! The Web Scraping Kit is a simple kit for VBA web scrapers that contains a set of ready-made examples for different scraping scenarios. The kit comes with several tools that let you leverage HTTP GET and POST, IE, proxies, XPath, Regex and more Web Scraping tools. Get […]

Web Scraping Proxy HTTP request using VBA

Visual Basic for Applications (VBA) is great for taking your first steps in Web Scraping, as Excel is ubiquitous and makes a good training ground. Web Scraping comes down to making HTTP requests to websites. At some point, however, you will find that some websites will cut you off or prevent multiple […]

Scrape Google Search Results to CSV using VBA

Google is today’s entry point to the world’s greatest resource: information. If something can’t be found on Google, it may well mean it is not worth finding. Similarly, SEO experts blog and write about how to optimize your web pages to rank best in Google Search results, often ignoring other search engines whose contribution […]